Every once in a while, you stick your head above the parapet, fully expecting it to be blown off … yet you still do it

Based on my limited understanding of the problem, here goes:

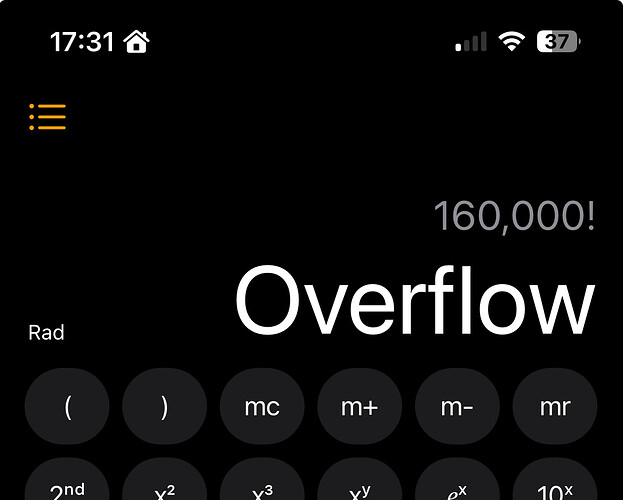

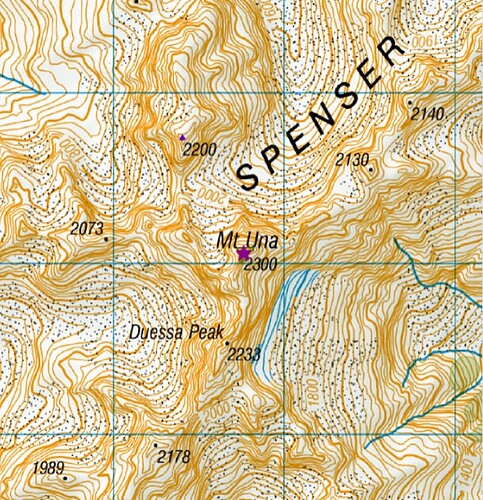

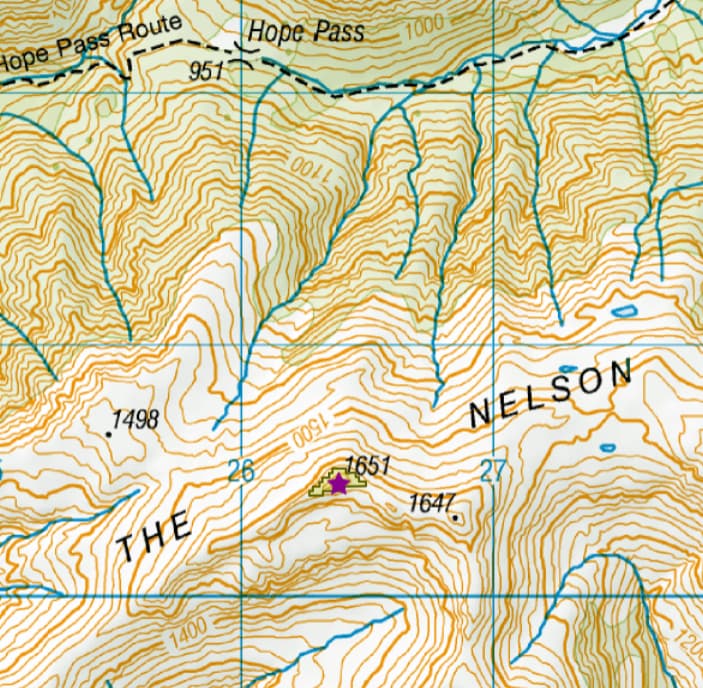

1 Assume we are going to do the full set of worldwide S2S calcs, comprising some 160,000 summits.

2 First, pull the relevant info (Lat, Long, name) from the SQL database into a flat CSV file.

3 For the distance calculations, we’ll use C++, as its standard library makes multi-threading fairly straightforward. My Linux desktop has 12 hyper-threaded cores, and we can use them all in parallel because the 12.8 billion distance calcs (160,000 × 159,999 / 2 unique pairs) have no interdependency.

4 Create a simple C++ class to represent a summit. Include data members for the input (which we’ll read from our flat file) and the result (pointer to 159,999 values?).

5 Assuming 8-byte doubles for lat, long and distance, plus 20+ bytes for the name, and a bit of overhead, that’s about 50 bytes per object.

6 Total size 160,000 * 50 = 8,000,000 bytes (8 MB), i.e. the input objects fit easily into memory on a typical 8GB machine.

7 We use the C++ std::vector type to store all the objects. Its elements are contiguous in memory, so access is very fast compared to linked lists etc.

8 Write out the results to a CSV file. Then sort …
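To make steps 3 to 7 a bit more concrete, here is a minimal sketch, not a finished program. It assumes a spherical Earth and the haversine formula (the steps above don't say which distance formula to use), and the names `Summit`, `haversine_km` and `all_pairs_km` are made up for illustration. Rows are dealt out to threads in an interleaved fashion, since the early rows contain more pairs than the late ones.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <string>
#include <thread>
#include <vector>

// Hypothetical record for one summit; field names are illustrative only.
struct Summit {
    std::string name;
    double lat_deg;
    double lon_deg;
};

constexpr double kEarthRadiusKm = 6371.0;  // mean radius, spherical-Earth assumption
constexpr double kPi = 3.14159265358979323846;

// Great-circle distance via the haversine formula (an assumed choice).
double haversine_km(const Summit& a, const Summit& b) {
    const double to_rad = kPi / 180.0;
    const double phi1 = a.lat_deg * to_rad;
    const double phi2 = b.lat_deg * to_rad;
    const double s1 = std::sin((phi2 - phi1) / 2.0);
    const double s2 = std::sin((b.lon_deg - a.lon_deg) * to_rad / 2.0);
    const double h = s1 * s1 + std::cos(phi1) * std::cos(phi2) * s2 * s2;
    // Clamp to 1.0 to guard against rounding pushing h just above 1.
    return 2.0 * kEarthRadiusKm * std::asin(std::sqrt(std::min(1.0, h)));
}

// rows[i] holds the distances from summit i to summits i+1 .. n-1,
// so every unique pair is computed exactly once.
std::vector<std::vector<float>> all_pairs_km(const std::vector<Summit>& s,
                                             unsigned n_threads) {
    assert(n_threads > 0);
    const std::size_t n = s.size();
    std::vector<std::vector<float>> rows(n);

    // Each thread takes rows t, t + n_threads, t + 2*n_threads, ...
    // No two threads touch the same row, so no locking is needed.
    auto worker = [&](unsigned t) {
        for (std::size_t i = t; i < n; i += n_threads) {
            rows[i].reserve(n - i - 1);
            for (std::size_t j = i + 1; j < n; ++j) {
                rows[i].push_back(static_cast<float>(haversine_km(s[i], s[j])));
            }
        }
    };

    std::vector<std::thread> pool;
    for (unsigned t = 0; t < n_threads; ++t) pool.emplace_back(worker, t);
    for (auto& th : pool) th.join();
    return rows;
}
```

Calling `all_pairs_km(summits, std::thread::hardware_concurrency())` would use every hardware thread the machine reports (that call can return 0, so check it first). Keeping every row in memory only works for small test sets; for the full 160,000 summits each row would presumably have to be written to disk as it is finished.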

Execution time hopefully rather less than 9 hours, but …

On reflection, storing then sorting 12.8 billion results could be somewhat more problematic than this …

Using 4-byte floats for the output, a simple binary results file would be 4 * 12.8 = 51.2 billion bytes (roughly 51 GB).
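For anyone who wants to check the arithmetic, here is a small sketch of it. The function names are hypothetical, and `pair_offset` rests on one further assumption of mine: that the binary file stores only the unique pairs, row by row, so the position of a value identifies the two summits and no indices need to be stored alongside it.

```cpp
#include <cassert>
#include <cstdint>

// Number of unique unordered pairs among n summits: n * (n - 1) / 2.
constexpr std::uint64_t unique_pairs(std::uint64_t n) {
    return n < 2 ? 0 : n * (n - 1) / 2;
}

// Size in bytes of a flat binary file holding one value per unique pair.
constexpr std::uint64_t result_bytes(std::uint64_t n, std::uint64_t bytes_per_value) {
    return unique_pairs(n) * bytes_per_value;
}

// Position of pair (i, j), with i < j < n, in a row-by-row layout where
// row i holds the distances from summit i to summits i+1 .. n-1.
inline std::uint64_t pair_offset(std::uint64_t i, std::uint64_t j, std::uint64_t n) {
    assert(i < j && j < n);
    return i * (2 * n - i - 1) / 2 + (j - i - 1);
}
```

With n = 160,000 this gives 12,799,920,000 pairs and 51,199,680,000 bytes at 4 bytes each, which matches the rounded 12.8 and 51.2 billion figures.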

73 Dave