Hi!

By this date, I’ll be 85 years old…

Who knows?

Gerald F6HBI

On Unix-style systems, the date is traditionally represented internally as a signed 32-bit integer counting the number of seconds since the epoch. For Unix systems (including Linux and Mac), the epoch is 00:00:00 UTC on 1st January 1970.
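To make the "seconds since the epoch" idea concrete, here is a minimal Python sketch that converts a date to its Unix timestamp and back, and checks whether the value still fits in a signed 32-bit integer. The helper names `to_unix_seconds`, `from_unix_seconds` and `fits_in_int32` are made up for illustration; this is not how any particular C library does it.

```python
from datetime import datetime, timedelta, timezone

# The Unix epoch: 00:00:00 UTC on 1 January 1970.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def to_unix_seconds(dt: datetime) -> int:
    """Whole seconds elapsed since the epoch (negative for dates before 1970)."""
    return (dt - EPOCH) // timedelta(seconds=1)

def from_unix_seconds(seconds: int) -> datetime:
    """Inverse of to_unix_seconds: seconds since the epoch back to a UTC date.

    Adding a timedelta to the epoch also works for negative values,
    unlike datetime.fromtimestamp on some platforms.
    """
    return EPOCH + timedelta(seconds=seconds)

def fits_in_int32(seconds: int) -> bool:
    """True if the value fits in a signed 32-bit integer."""
    return -(2**31) <= seconds <= 2**31 - 1

if __name__ == "__main__":
    new_year_2016 = datetime(2016, 1, 1, tzinfo=timezone.utc)
    secs = to_unix_seconds(new_year_2016)
    print(secs, fits_in_int32(secs))  # 1451606400 True
    print(from_unix_seconds(secs))    # 2016-01-01 00:00:00+00:00
```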

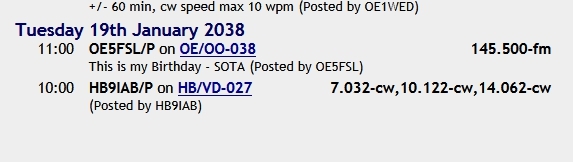

If you enter an invalid date, e.g. 12/31/2015, then the internal value becomes 0x7FFFFFFF, the largest value a signed 32-bit integer can hold. When you feed that value back into the date library code to convert it to day/month/year, the result is 19th January 2038 (03:14:07 UTC). This is when the Unix clock rolls over. It is the same kind of issue as the famous Year 2000 bug. Because 2038 was still 38 years away when software was updated to fix the 1999-to-2000 rollover problems, nobody could be bothered to fix the 2038 issues. It’s now only 22 years away, so we have loads of time to prevaricate and ignore it.
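The rollover itself can be reproduced in a few lines. Python integers never overflow, so the hypothetical helper `wrap_int32` below mimics the two's-complement wraparound a 32-bit signed counter typically exhibits: 0x7FFFFFFF decodes to 19th January 2038, and one second later the value wraps to the most negative 32-bit number, which decodes to December 1901.

```python
from datetime import datetime, timedelta, timezone

# The Unix epoch: 00:00:00 UTC on 1 January 1970.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)

def wrap_int32(value: int) -> int:
    """Reduce an integer to the signed 32-bit range, as two's-complement wraparound would."""
    return (value + 2**31) % 2**32 - 2**31

def from_unix_seconds(seconds: int) -> datetime:
    """Seconds since the epoch to a UTC date."""
    return EPOCH + timedelta(seconds=seconds)

if __name__ == "__main__":
    last = 0x7FFFFFFF  # largest signed 32-bit value, 2147483647
    print(from_unix_seconds(last))                  # 2038-01-19 03:14:07+00:00
    print(from_unix_seconds(wrap_int32(last + 1)))  # 1901-12-13 20:45:52+00:00
```

Note the wrap goes backwards to 1901 rather than forward to 0, which is why the failure mode is "dates in the distant past" rather than a clean stop.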

At this point some “smart” person will comment that a huge amount of money was spent on Year 2000 issues, yet when the moment came the world didn’t end, as if that proved that spending the money was a waste of resources. Of course, the fact that the money was spent and the issues were fixed is why there were so few problems. But let’s not ever let facts get in the way of a good moan!

Let’s try it, Gerald, I’ll be 92 then… but one never knows what may happen…

73 gl cu, Heinz

A succinct summary. Having worked for a prominent Linux vendor for the past ten years or so, I can add a little more colour.

On Linux systems, the time is now represented internally by a 64-bit number, which should take us well past the point the Sun swallows Earth. However, the older interfaces built around a 32-bit time_t (typically on 32-bit platforms) can only hold a 32-bit range, so even though the internal epoch time is 64-bit, using those interfaces still limits you to the 2038 cutoff. The problem is therefore largely solved; it just needs a change to the API (which will probably take about 20 years) and people to stop using the older interfaces (which’ll take another 10). Damn.
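A quick back-of-the-envelope check of the two ranges, assuming a mean Gregorian year of 365.2425 days (`max_years` is a hypothetical helper): a signed 32-bit count of seconds runs out after roughly 68 years, while a signed 64-bit count lasts roughly 292 billion years, comfortably outliving the Sun.

```python
# Mean Gregorian year length in seconds (365.2425 days of 86,400 s).
SECONDS_PER_YEAR = 365.2425 * 86_400

def max_years(bits: int) -> float:
    """Years after 1970 reachable by a signed integer of the given width."""
    return (2 ** (bits - 1) - 1) / SECONDS_PER_YEAR

if __name__ == "__main__":
    for bits in (32, 64):
        print(f"{bits}-bit time_t: about {max_years(bits):,.0f} years after 1970")
```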

As for 2038 problems not being an issue yet: in 2008 we had a raft of support tickets and issues around banks that were issuing 30-year loans (a loan taken out in 2008 matures in 2038, right around the rollover) and running into errors in calculations, mainly in the database layer, though some of it filtered down into the OS layer. It led to some really interesting discussions about the ways you can use the time_t type in arithmetic: basically, use signed operations so that overflow sets the CPU's overflow flag, and then actually check that flag. If overflow occurs, you can only rely on certain operations working correctly.
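The checked-arithmetic idea can be sketched in Python. This is not how a database or kernel does it: Python has no CPU flags to inspect, so the hypothetical helper `checked_add_int32` computes the exact sum and range-checks it, which has the same effect as checking the overflow flag after a signed 32-bit add. The loan date is made up; note that only 30-year terms starting after 19th January 2008 actually cross the rollover.

```python
from datetime import datetime, timezone

INT32_MIN = -(2**31)
INT32_MAX = 2**31 - 1
SECONDS_PER_DAY = 86_400

class Int32Overflow(ArithmeticError):
    """Raised when a sum would not fit in a signed 32-bit time value."""

def checked_add_int32(a: int, b: int) -> int:
    """Add two time values, refusing to hand back a wrapped result.

    Python integers never overflow, so compute the exact sum and then
    range-check it; this stands in for testing the CPU's overflow flag.
    """
    result = a + b
    if not INT32_MIN <= result <= INT32_MAX:
        raise Int32Overflow(f"{a} + {b} does not fit in a 32-bit time_t")
    return result

if __name__ == "__main__":
    # Hypothetical loan issued at 00:00 UTC on 1 March 2008.
    issued = int(datetime(2008, 3, 1, tzinfo=timezone.utc).timestamp())
    # 1 March 2008 to 1 March 2038 spans 7 leap days (2012 through 2036).
    term = (30 * 365 + 7) * SECONDS_PER_DAY
    try:
        matures = checked_add_int32(issued, term)
        print("matures at", matures)
    except Int32Overflow as err:
        print("cannot store maturity date:", err)
```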

The fact that the loans were largely being issued to people with no income, jobs or chance of repaying them made the 30-year term moot, of course.